I Talk to AI While I Code. Here's What Works, What Fails, and Where I Stop.

Devesh Korde

March 25, 2026

I was reading about Nvidia's GTC announcements last week: new chips, new partnerships, new records. Exciting stuff. Then I came across a number that stopped me cold.

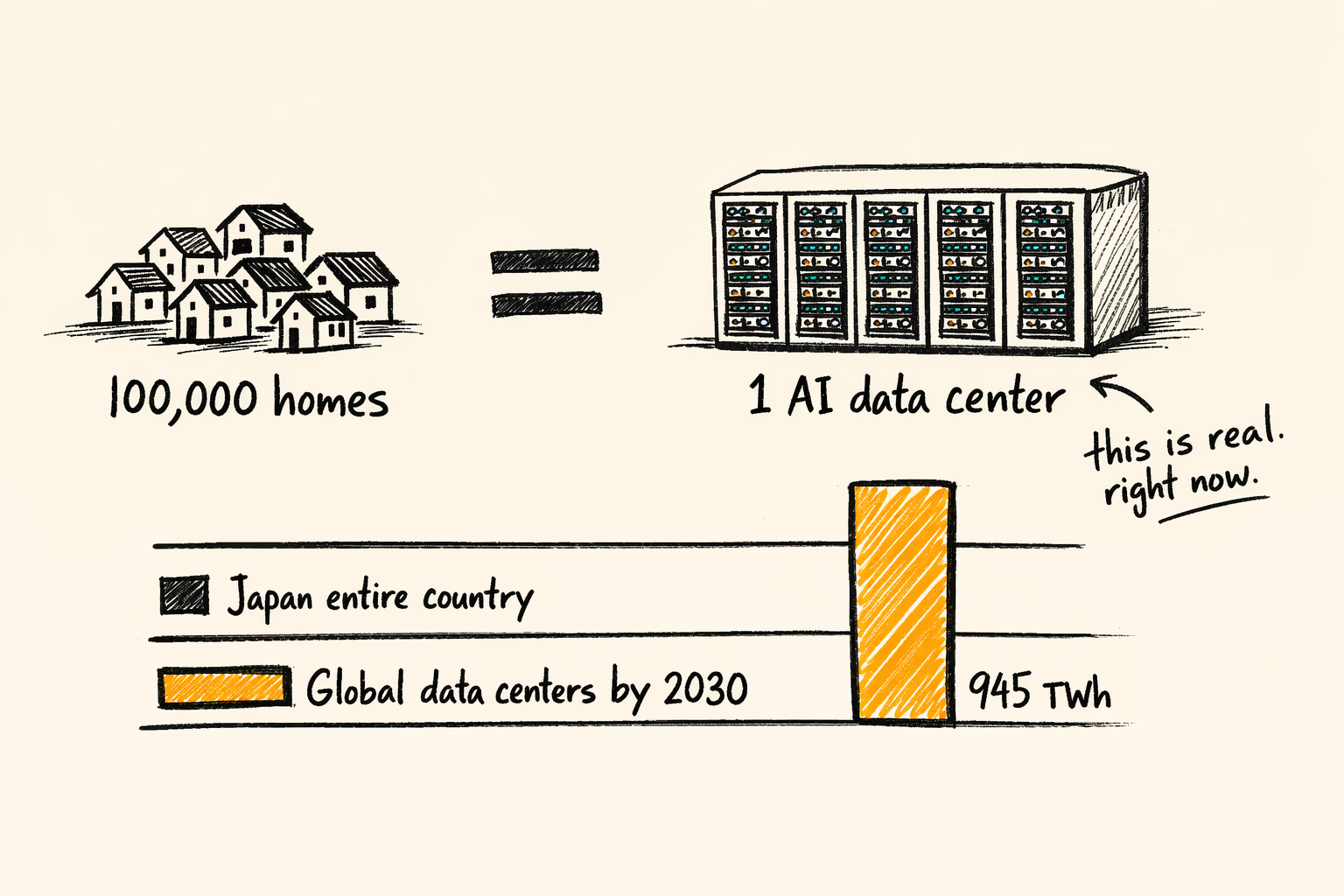

A single AI data center campus can consume more electricity than 100,000 homes.

That's not a typo. Not a projection for 2030. That's happening right now, in 2026, in places like northern Virginia where "Data Center Alley" already eats up 26% of the state's total electricity. And the people living near these campuses? Their power bills have gone up 42% since 2019.

Every time you ask ChatGPT a question, every time Copilot autocompletes your code, every time an AI model trains on another trillion tokens, there's a physical cost. Electricity, water, heat. Real resources consumed in real places by real machines.

Nobody talks about this at product launches. But it's becoming the defining tension of the AI era.

Let me throw some data at you because this isn't a vibes argument. It's math.

The International Energy Agency released a report this year projecting that global data center electricity demand will more than double by 2030, reaching around 945 TWh. That's roughly the entire electricity consumption of Japan. AI is the primary driver.
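To put that annual figure on the same scale as the gigawatt numbers that come up later, here is a minimal sketch that converts energy per year into average continuous power. The function name is my own, and it assumes a flat load across a non-leap year, which real data centers won't match exactly.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year, load spread evenly


def twh_per_year_to_avg_gw(twh_per_year: float) -> float:
    """Convert annual energy (TWh/year) to average continuous power (GW)."""
    # 1 TWh = 1000 GWh, and GWh divided by hours gives GW.
    return twh_per_year * 1000 / HOURS_PER_YEAR


projected_2030_twh = 945  # the projection quoted above
avg_gw = twh_per_year_to_avg_gw(projected_2030_twh)
print(f"{projected_2030_twh} TWh/year is about {avg_gw:.0f} GW of continuous draw")
```

In other words, the projection is equivalent to a bit over a hundred gigawatts running around the clock.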

In the United States alone, data centers are on track to account for nearly half of all electricity demand growth between now and 2030. Here's the part that hit me hardest: the US economy is projected to consume more electricity for processing data in 2030 than for manufacturing all energy-intensive goods combined, including aluminium, steel, and chemicals.

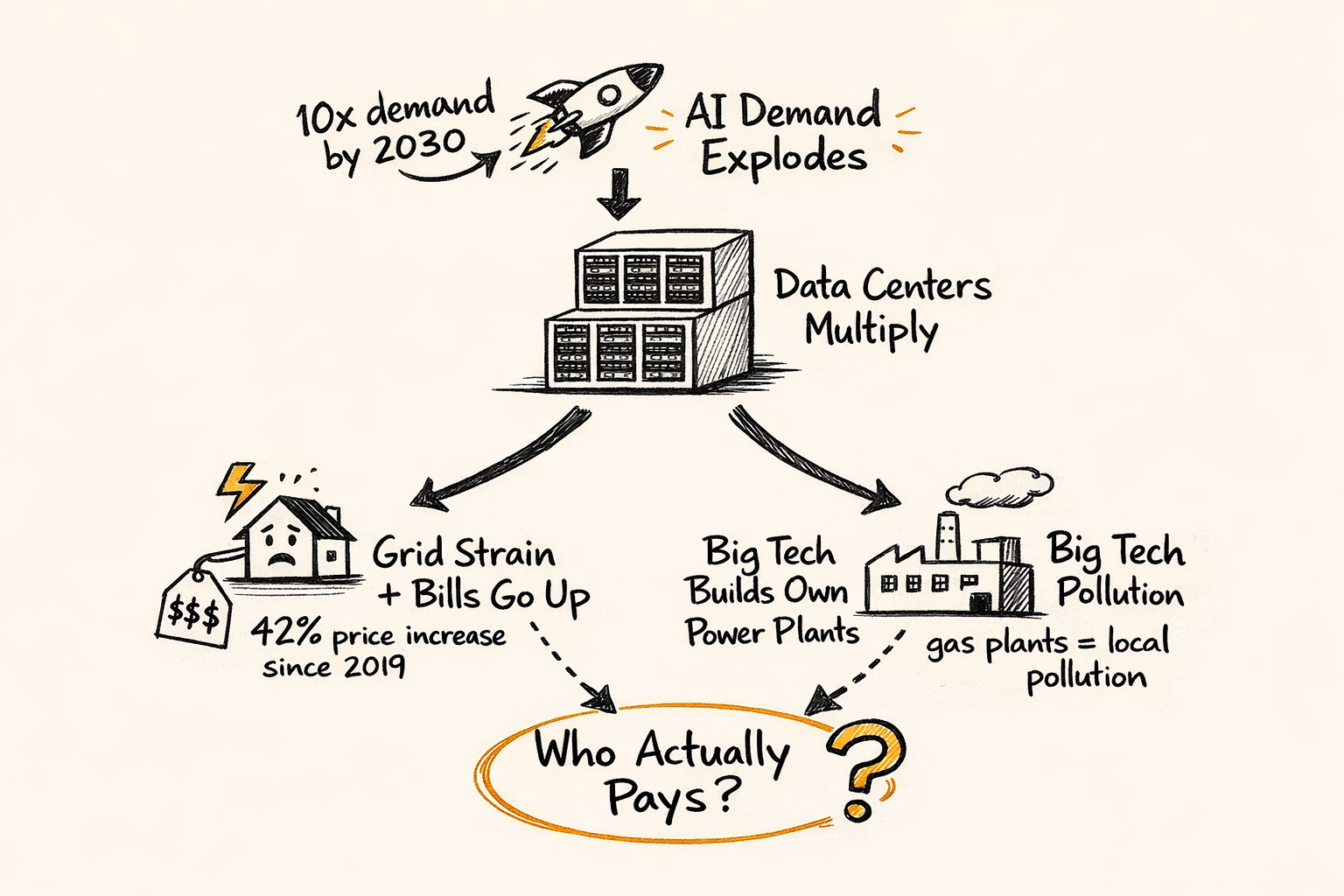

We went from "AI is software eating the world" to "AI is eating the power grid." And the grid wasn't built for this.

PJM Interconnection, the largest US grid operator, serving 65 million people across 13 states, is projecting it'll be six gigawatts short of reliability requirements by 2027. For context, that's roughly six nuclear power plants' worth of missing capacity. The grid operator's president said he's never seen the system under this kind of projected strain.

Here's where it gets personal.

When a massive data center moves into your town and starts pulling gigawatts from the local grid, two things happen. First, the infrastructure needs upgrading: transmission lines, substations, generation capacity. Second, that cost gets shared across everyone connected to the grid.

Retail electricity prices in the US have risen from 12.76 cents per kilowatt-hour in 2020 to 17.44 cents in February 2026. That's roughly a 37% increase in six years. Goldman Sachs projected that data center power consumption alone will boost core inflation by 0.1% in both 2026 and 2027. Capacity market prices in PJM have spiked nearly tenfold.
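If you want to check that arithmetic yourself, here is a minimal sketch using the two retail prices quoted above. The helper names are mine, and treating the span as exactly six years is an assumption (2020 to February 2026 is only approximately that).

```python
def percent_change(old: float, new: float) -> float:
    """Total percentage change from old to new."""
    return (new - old) / old * 100


def annualized_rate(old: float, new: float, years: float) -> float:
    """Compound annual growth rate, in percent, over the given span."""
    return ((new / old) ** (1 / years) - 1) * 100


price_2020 = 12.76  # cents per kWh, from the text
price_2026 = 17.44  # cents per kWh, from the text
years = 6           # assumption: span rounded to six years

total = percent_change(price_2020, price_2026)
yearly = annualized_rate(price_2020, price_2026, years)
print(f"Total increase: {total:.1f}%")
print(f"Annualized: {yearly:.2f}% per year")
```

The total comes out just under 37%, close to the figure quoted above, and the compound rate lands a little above 5% a year.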

Regular families in Ohio, Virginia, and Texas are paying higher electricity bills partly because a trillion-dollar company built a server farm next door. An energy policy researcher at the University of Pennsylvania put it simply: when a single data center campus uses more power than a small city, the old model of splitting infrastructure costs evenly stops making sense.

There was even a near-miss blackout in Data Center Alley when a campus disconnected from the grid suddenly, creating a massive power surge that almost caused cascading outages across the region. Federal regulators said the grid was never designed to handle that kind of concentrated, volatile load.

Communities are pushing back. From rural Virginia to the Arizona desert, people who once welcomed tech investment are now protesting data centers. Politicians from Bernie Sanders to Ron DeSantis have raised concerns. Virginia, Georgia, Indiana, and Washington have all enacted or proposed laws requiring data center operators to fund infrastructure upgrades proportional to their electricity use.

The question is uncomfortable but fair: should middle-class families subsidize the electricity needs of the wealthiest industry in history?

At a White House event earlier this month, executives from Google, Microsoft, Meta, Oracle, Amazon, and OpenAI pledged to build or buy their own power generation for AI data centers rather than passing costs to consumers.

Sounds great on paper. Reality is messier.

The CEO of Cleanview, a market research firm tracking data centers and clean energy, pointed out that data centers aren't the only reason electricity prices have risen. The aging grid needs rebuilding. Wildfires and hurricanes have forced expensive repairs. Data centers are accelerating the problem, not causing it alone.

But the proposed solution, Big Tech building its own natural gas plants, creates a different problem entirely: local air pollution. These aren't solar installations. They're gas-burning power plants sitting next to communities that didn't ask for them.

And going fully off-grid isn't realistic either. Powering a single large data center would require thousands of acres of solar panels. The grid is essential because it can transmit power from wind and solar farms hundreds of miles away. You can't just slap panels on a data center roof and call it green.

This is pushing nuclear power back into mainstream conversation. Multiple tech companies are exploring nuclear as the only carbon-free source that can deliver consistent, massive baseload power around the clock. Solar and wind are intermittent. Nuclear isn't. And a single nuclear plant can match the gigawatt-scale appetite of an AI campus.

You might be thinking: interesting, but what does this have to do with me? I just write code.

More than you think.

Every API call to an LLM has a real energy cost. Every model you fine-tune, every RAG pipeline you build, every agentic workflow you deploy: there's physical infrastructure burning electricity behind it. And that cost is showing up in pricing.

Micron just reported revenue of $23.86 billion, beating expectations, driven almost entirely by AI memory demand. They're boosting capital spending to $25 billion to keep up. But the AI boom's appetite for memory-rich server hardware is tightening supply for consumer products. Gamers and PC builders are already paying more because the AI industry is hoarding components.

If you're building products on top of AI inference, understand this: the cost of compute isn't going down. Energy is becoming the bottleneck, not model capability. The companies that win in the next few years won't just have the best models; they'll have the best access to power.

For startups, the moat in AI is shifting. It's no longer just data or algorithms. It's infrastructure. It's having a deal with a power company. It's being in a geography where the grid can handle your load. It's planning for energy costs the same way you plan for cloud costs.
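Here is a rough sketch of what "planning for energy costs the same way you plan for cloud costs" could look like as a back-of-envelope calculation. Everything in it is illustrative: the `EnergyBudget` class is hypothetical, the per-request energy and PUE values are placeholders rather than measurements, and the retail price from earlier is only a stand-in, since large operators negotiate their own rates.

```python
from dataclasses import dataclass


@dataclass
class EnergyBudget:
    """Hypothetical back-of-envelope energy estimate for an inference workload."""

    requests_per_day: int
    wh_per_request: float       # ASSUMPTION: placeholder, not a measured value
    pue: float                  # ASSUMPTION: facility overhead (power usage effectiveness)
    price_cents_per_kwh: float  # stand-in electricity price

    def kwh_per_month(self, days: int = 30) -> float:
        # Wh per request, scaled by facility overhead, converted to kWh.
        wh = self.requests_per_day * self.wh_per_request * self.pue * days
        return wh / 1000

    def cost_usd_per_month(self, days: int = 30) -> float:
        return self.kwh_per_month(days) * self.price_cents_per_kwh / 100


budget = EnergyBudget(
    requests_per_day=1_000_000,
    wh_per_request=0.3,         # placeholder value for illustration only
    pue=1.2,                    # placeholder value for illustration only
    price_cents_per_kwh=17.44,  # the February 2026 retail price quoted earlier
)
print(f"{budget.kwh_per_month():,.0f} kWh per month")
print(f"${budget.cost_usd_per_month():,.2f} per month in electricity")
```

The exact numbers matter less than the habit: swap in your own measurements and the estimate scales linearly with traffic, per-request energy, and price.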

Germany is doubling its AI data center footprint by 2030, treating compute capacity as strategic national infrastructure. The US is debating chip export frameworks that tie hardware access to domestic data center investment. Singapore and South Korea just announced a $300 million AI alliance. AI infrastructure isn't just a business problem anymore; it's geopolitics.

We're living through a strange moment. The most advanced technology humans have ever built is constrained by one of the oldest problems in industrial history: where does the power come from?

The AI companies talk about intelligence, reasoning, agents, superintelligence. The reality on the ground is transformers, substations, cooling towers, and utility commissions. It's a retired couple in Ohio whose electricity bill jumped because a data center moved into their county.

I'm not saying we should stop building AI. I use these tools every single day, and they make me a better developer. But I think we should be honest about the costs. Not just the API pricing page, but the real, physical, environmental costs that are being distributed across communities that never asked for a server farm.

The AI revolution has a power bill. And right now, we're all splitting it, whether we signed up for it or not.