Devesh Korde

March 20, 2026

I have been thinking about something that nobody in tech wants to say out loud.

Not because it is controversial. Because it is uncomfortable in a way that hits closer to home than most tech debates do.

When I started using Claude regularly, not just for experiments but as an actual part of how I work, something shifted. Not in my output. My output got better. Something shifted in how I thought about myself.

The question I kept circling back to was simple and had no clean answer.

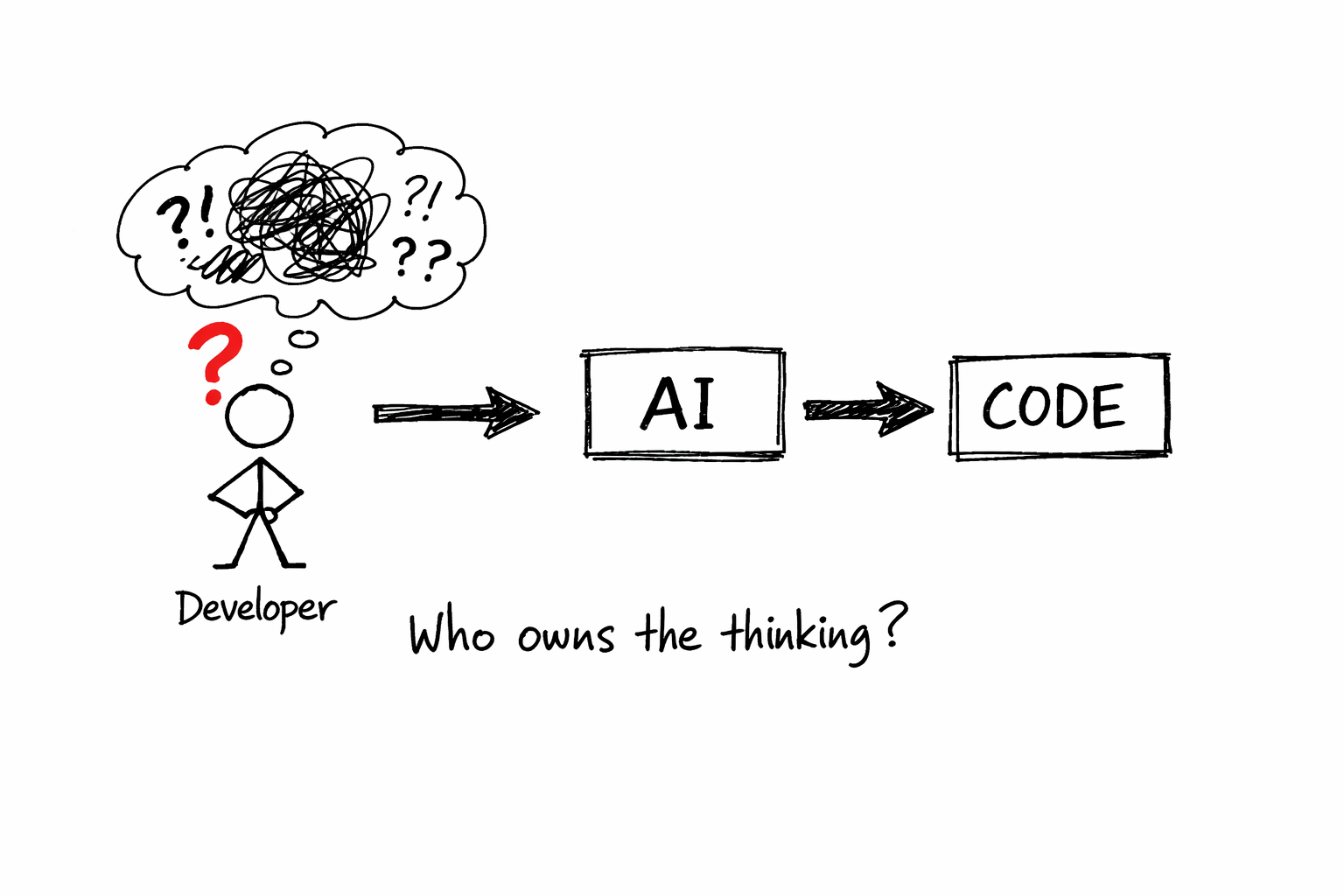

If a tool can do part of what I spent years learning to do, what does that make me?

Here is the thing nobody tells you when you are starting out. You think you are learning to write code. But what you are actually doing is building a mental model of how systems behave. You are learning to think in a particular way. To break a problem into smaller problems. To reason about state, about failure, about edge cases, about what happens when two things interact that were never supposed to meet.

The code is the output of that thinking. It was never the thinking itself.

I knew this intellectually. But emotionally, the code felt like proof. It was the artifact that said: I was here. I understood this. I built this.

AI changed the artifact problem. The code still gets written. My name is still on the commit. But now I am less sure how much of the thinking was mine.

I want to talk about someone I keep thinking about.

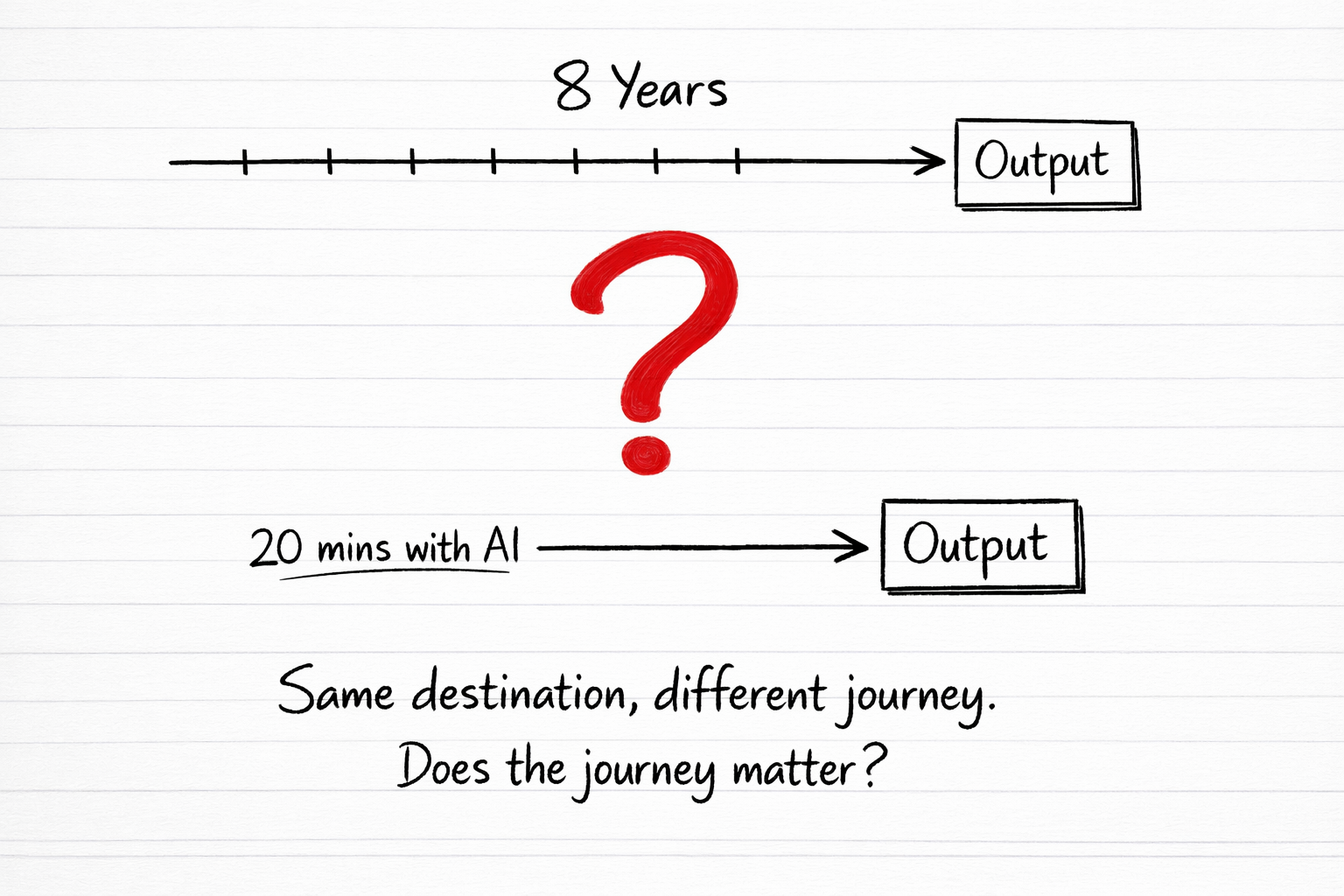

Imagine a developer who spent eight years getting really good at Angular. Not just using it. Understanding it. Reading the source code. Knowing why decisions were made. Building the mental model that lets them look at a bug and know within two minutes where it is hiding.

Eight years. Real years. Late nights, bad documentation, Stack Overflow at 2am, conference talks, side projects that went nowhere, arguments about architecture that sharpened their thinking.

Then a junior developer sits down with Claude and produces working Angular code in twenty minutes.

The senior watches this happen.

What does that senior feel?

I think the honest answer is not just threatened. I think they feel something that has no clean word in English. A kind of retroactive confusion. Like the ground shifted under years they already lived.

Did I waste that time? Was the struggle necessary or just the tax I had to pay before the shortcut existed?

The answer matters because it is not just about them. It is about what we tell the next generation of developers. It is about whether depth still means something.

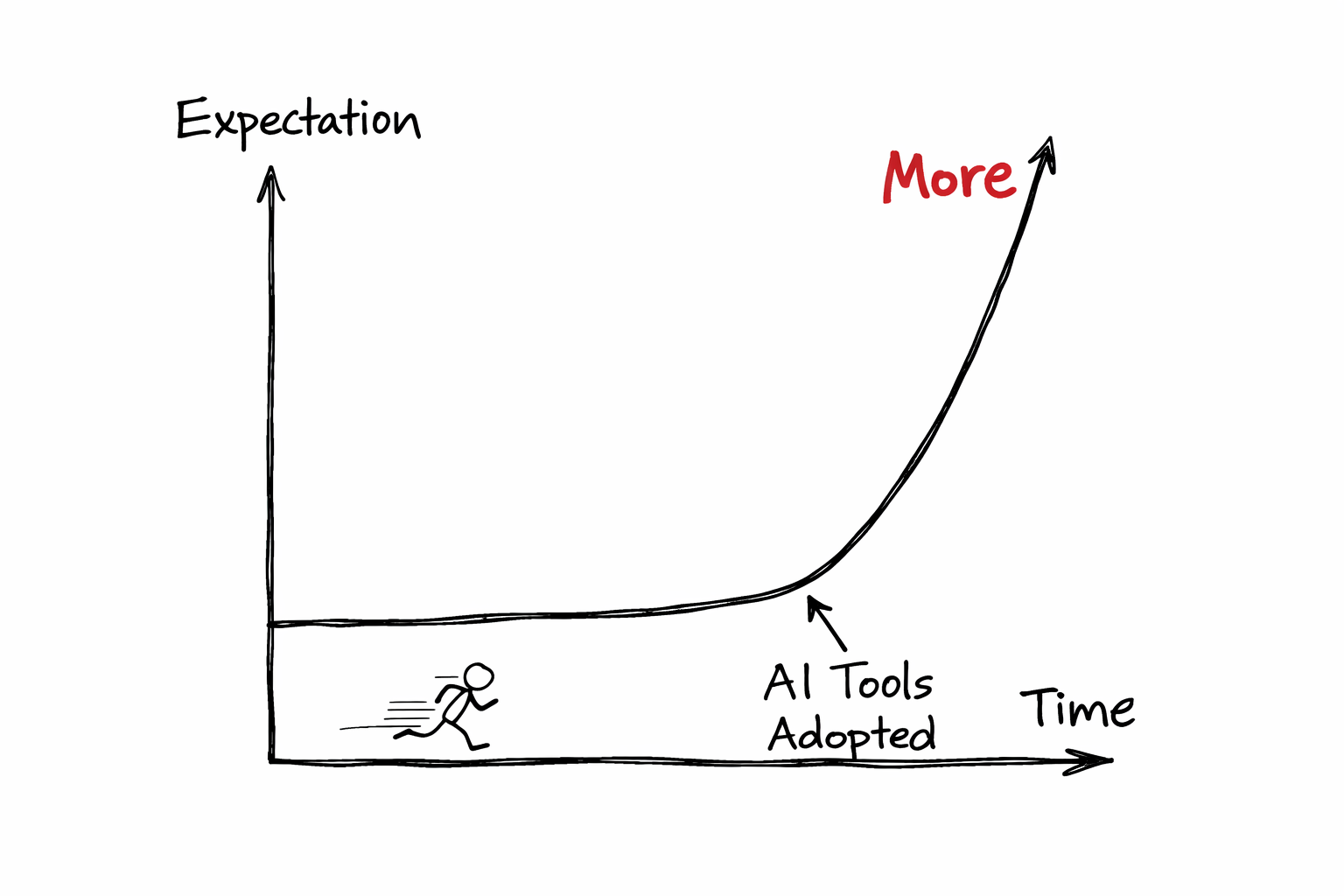

Here is the part that catches developers off guard.

When AI makes you faster, the world does not give you the time back. It raises the bar.

You used to ship a feature in a week. Now you can do it in two days. So now a week means three features. The sprint fills up. The roadmap gets more ambitious. Stakeholders who barely understood what a sprint was before now have opinions about velocity.

The developer did not become more relaxed. They became a busier manager of a faster machine.

This is the part of the AI productivity story that the hype cycle quietly skips. The efficiency gains do not flow back to the worker as rest. They flow upstream as expectation. The same thing happened with email. With smartphones. With every tool that made knowledge workers faster.

You are not getting your evenings back. You are getting harder problems with the same deadline.

Something else happened that I did not anticipate.

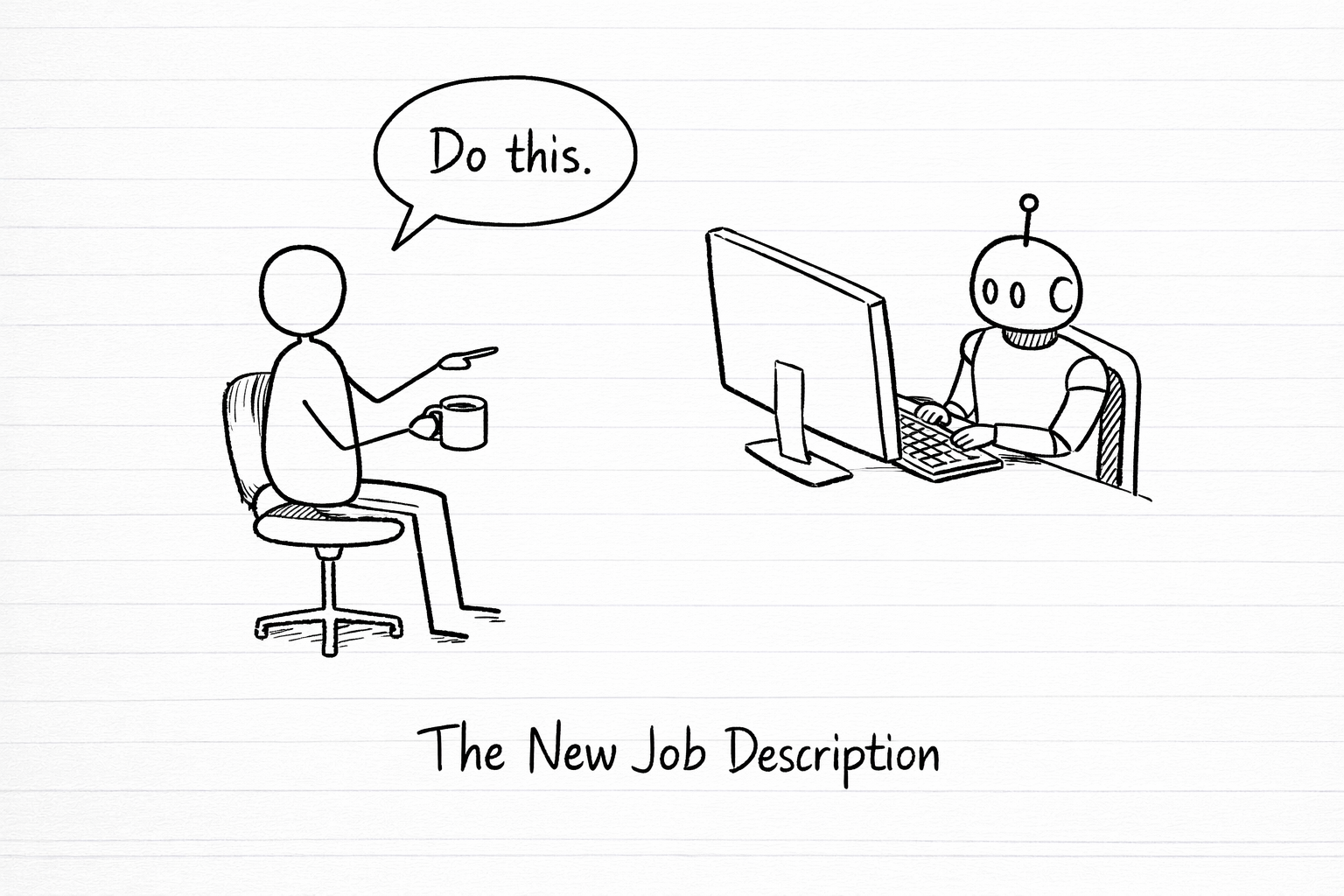

My job quietly changed shape without anyone officially changing my title.

I used to write code. Now I review code, direct code, correct code, and make decisions about code that something else drafted. I am less of a craftsperson and more of a technical director for a very fast and occasionally overconfident junior.

This is not a complaint. The output is genuinely better in many ways. But it requires a different kind of presence. I need to stay sharp enough to catch what Claude misses. I need to understand the system well enough to know when a suggestion that looks correct is actually subtly wrong for this specific context.

If I let my skills drift because the tool handles the surface-level work, I lose the ability to evaluate the surface-level work. And that is the trap.

The developer who stops reading code carefully because AI writes it well enough will eventually not be able to tell when AI writes it badly. The tool makes you dependent on the tool. That dependency is quiet and accumulates slowly.

I have been sitting with this question for months and I do not have a tidy answer. What I have is a working position that feels honest.

The senior developer who spent eight years learning Angular did not waste those years. They built something that cannot be replicated by a twenty-minute session with a language model. They built judgment. The ability to evaluate what the model produces. The experience to know when something is technically correct but architecturally wrong. The pattern recognition that comes from having been burned by the things the model has never been burned by.

The junior who learns with AI is not getting the same education even if they are getting the same output. They are getting the answer without the struggle that builds the instinct. That might be fine for many kinds of work. It will not be fine for the work that requires genuine depth.

What I think is changing is not what a developer is. It is what a developer needs to protect.

You need to protect your thinking. The ability to reason from first principles, to hold a complex system in your head, to debug without a crutch. Because the moment you cannot do those things, you are not a developer who uses AI. You are a person who can prompt AI, which is a much more fragile thing to be.

The craft did not disappear. It moved upstream. It lives now in the decisions, the architecture, the judgment calls, the things that require having been wrong enough times to know what right actually looks like.

That is still yours. And no model trained on everyone else's code has it.

I will end with the uncomfortable part.

Some of the developers who are most threatened by AI are not threatened because the tool is too good. They are threatened because they were never really building the deeper thing. They were writing code without building judgment. Shipping features without understanding systems. Getting promoted on output without developing the thinking that makes output reliable.

AI did not create that problem. It revealed it.

And for the developers who were building the real thing, who were developing genuine depth and taste and judgment, AI is not the end of their craft. It is a filter. The thing that separates the people who understood what they were doing from the people who were good at looking like they did.

That is a brutal thing to say. I think it is true.

The identity question is not really about AI. It is about whether you were ever more than the code you wrote. If you were, you are still that. If you were not, that was always the problem.